Pilot to Measure Clinical Quality: Radiologist-to-Clinician Feedback

As part of a two-part pilot to collect peer review data for quality improvement at the University of Pittsburgh Medical Center (UPMC), I designed and built a custom plug-in application on top of the hospital’s existing radiology software. This plug-in allows radiologists to provide feedback to three clinical groups that they frequently interact with: technologists who perform radiology exams, other radiologists whose reports and diagnoses they are required by law to review, and the clinicians who refer patients to radiologists for imaging exams.

Custom Pilot to Measure Clinical Quality: Clinician-to-Radiologist Feedback

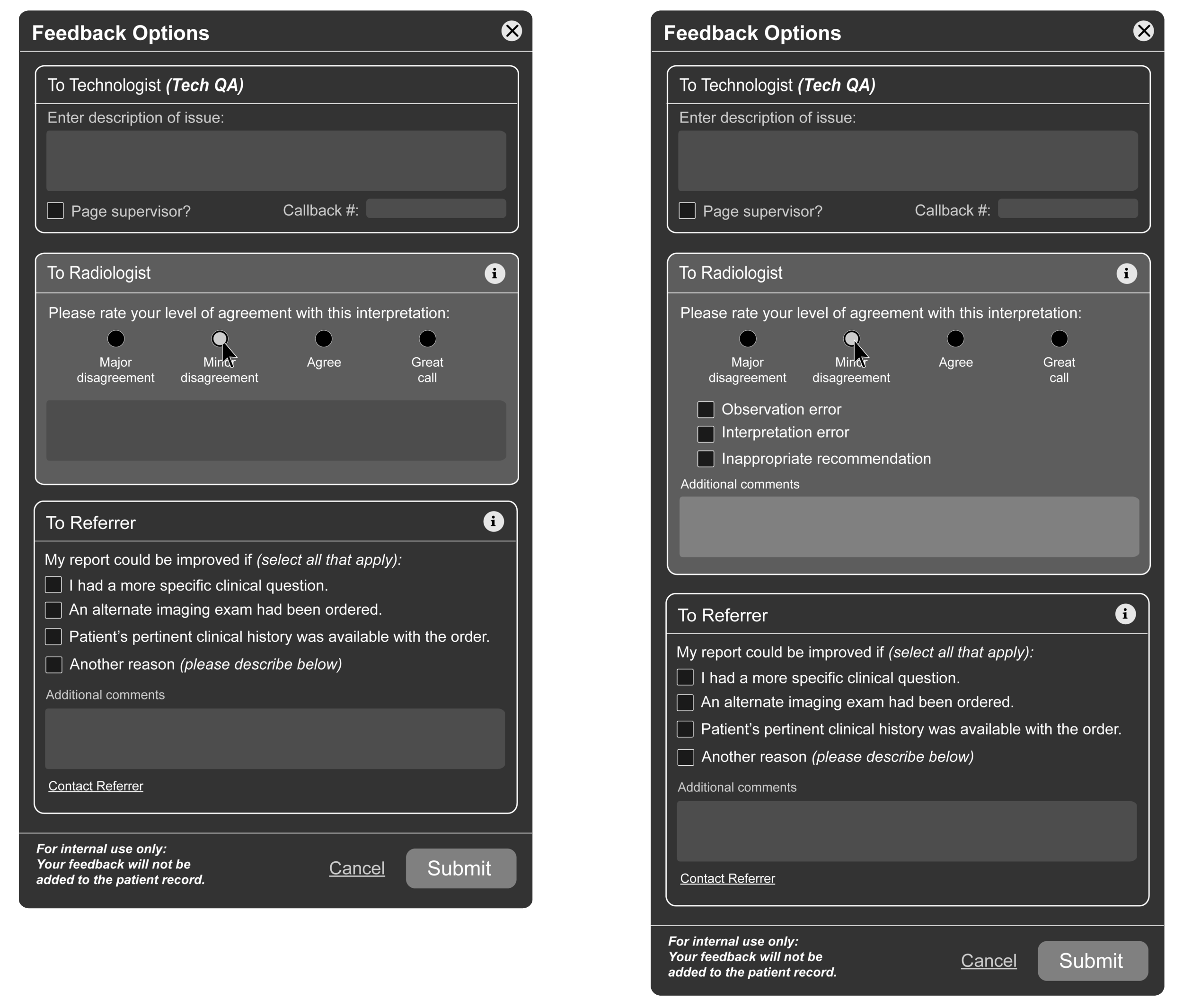

The second portion of the pilot is a custom feedback plug-in for referring clinicians who prescribe imaging exams for patients. This plug-in allows referring clinicians to give radiologists feedback on how much their radiology reports helped in reaching a well-informed diagnosis for their patients. This was a new idea for UPMC, and referring providers met it with much enthusiasm.

Marketing Video and Materials for UPMC Clinician Pilot Adoption: As the team finished development of the two custom applications, my design team put together promotional and learning materials to encourage referring clinicians in the UPMC network to use the pilot.

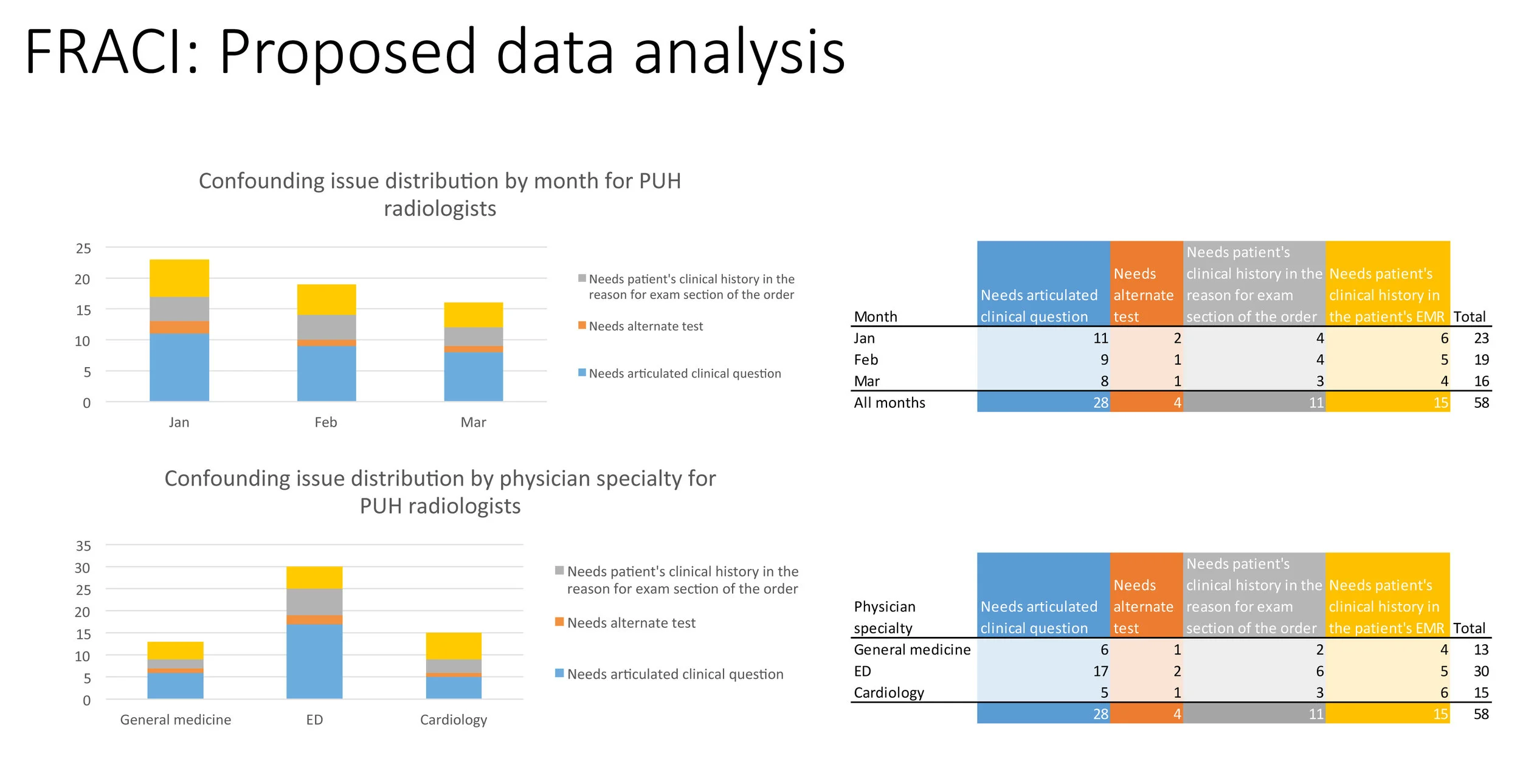

KPIs for Quality Measurement: Feedback Analytics

The Good News: Referring clinicians are happy with radiologists' reports and diagnoses. The Referring-to-Radiologist dataset comprised 88 entries over the 9 weeks since launch. Based on the referrer feedback, reports on 57 studies (65%) were deemed very helpful, and reports on 11 studies (13%) were deemed somewhat helpful. Reports on 8 (9%), 6 (7%), and 6 (7%) studies were rated neutral, unhelpful, and problematic, respectively.

The Bad News: Radiologists are frustrated with the lack of clinical data that referring clinicians provide. The radiologist-to-referring pilot collected 154 entries over the same 9-week period. There were 97 entries (63%) reporting a lack of pertinent clinical history from referring clinicians, 93 of which were CT reports. Another 10 (6%) wanted referring clinicians to indicate a more specific clinical question, and 27 (18%) felt that the information given by referring clinicians was unhelpful for other reasons. The remaining 20 entries (13%) provided only comments outside of the given reasons.
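The percentages in both summaries can be reproduced from the raw counts. The snippet below is only a minimal sketch of that arithmetic, not the pilot's actual analytics code: the function names and the category labels are hypothetical shorthand for the feedback options described above, and it assumes percentages were rounded to the nearest whole number with halves rounded up.

```python
def share_percent(count: int, total: int) -> int:
    """Whole-number percentage of total, rounding halves up.

    Integer arithmetic is used so that 11 of 88 (exactly 12.5%) becomes 13;
    Python's built-in round() would round that case to 12.
    """
    return (200 * count + total) // (2 * total)


def summarize(counts: dict[str, int]) -> dict[str, int]:
    """Map each feedback category to its share of all entries."""
    total = sum(counts.values())
    return {label: share_percent(n, total) for label, n in counts.items()}


# Counts taken from the two pilot summaries; labels are paraphrased.
referring_to_radiologist = {
    "very helpful": 57,
    "somewhat helpful": 11,
    "neutral": 8,
    "unhelpful": 6,
    "problematic": 6,
}

radiologist_to_referring = {
    "lack of pertinent clinical history": 97,
    "clinical question not specific enough": 10,
    "unhelpful for other reasons": 27,
    "comments only": 20,
}

for name, counts in [
    ("Referring-to-Radiologist", referring_to_radiologist),
    ("Radiologist-to-Referring", radiologist_to_referring),
]:
    print(name, "entries:", sum(counts.values()))
    for label, pct in summarize(counts).items():
        print(f"  {label}: {counts[label]} ({pct}%)")
```

Because each share is rounded independently, the rounded percentages in a dataset need not add up to exactly 100; the first set above adds up to 101.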

Research on Radiologist-to-Radiologist Peer Review

In conjunction with the implementation of the feedback pilots, I conducted multiple rounds of user research with radiologists across the country to understand new national quality requirements for radiologist-to-radiologist peer review. Because rad-to-rad feedback is mandated for radiologists to maintain their licensure, this aspect of clinical feedback is particularly important. Through this research, I identified the needs for a robust peer review system and found that many of the apps currently used for rad-to-rad peer review were lacking.

An In-App Ecosystem for Clinician Peer Review

Based on the results coming in from the two pilots and the research I conducted with radiologists about peer review, I designed a peer review and full-loop feedback and analytics system for the apps within GE's Imaging Desktop suite. This system would allow referring clinicians to provide quality feedback to radiologists in their native viewing application, and would enable radiologists to meet new national standards for quality by embedding a peer review feature within Workflow Manager. The data could then be visualized in a personalized analytics dashboard, as well as transmitted per department to national quality and reporting bodies.